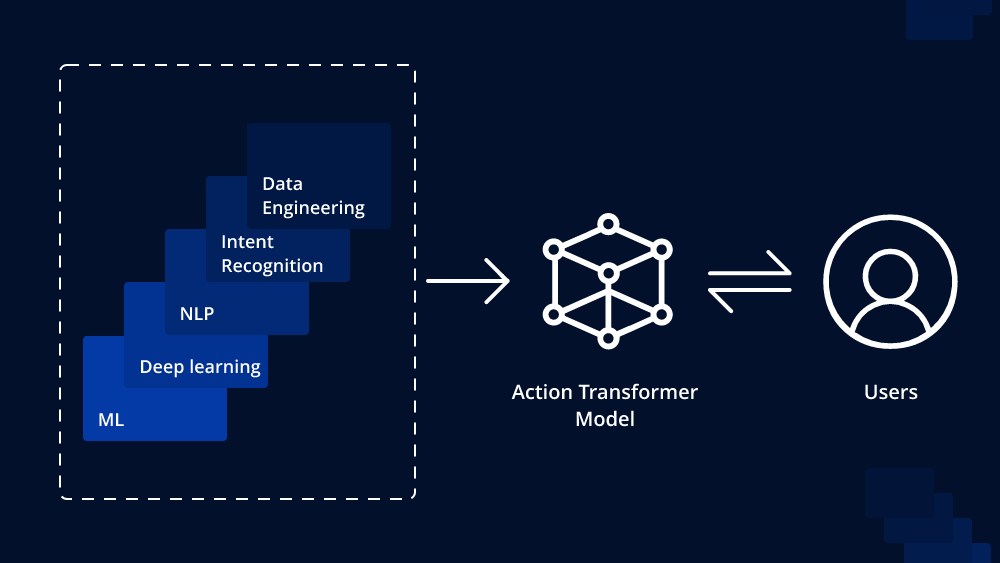

Transformer models have reshaped natural language processing, and researchers keep extending the architecture to new kinds of problems. One such extension is the Action Transformer Model, which pairs a Transformer’s grasp of context with the ability to choose actions, such as generating a coherent response. That combination could benefit applications from chatbots to machine translation. In this article, we look at how these models work and how they might change the way we interact with machines.

The Evolution of Transformers

Before looking at Action Transformer Models, let’s briefly revisit the foundation they are built on: the Transformer architecture. Introduced in 2017 by Vaswani et al., the Transformer marked a turning point for NLP. It relies on self-attention, which lets a model weigh the relationships between all words in a sentence at once rather than processing them one after another. Because every position can be computed in parallel, Transformers train far more efficiently than the recurrent models that preceded them, and they perform better on many NLP tasks.
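To make the idea of self-attention concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is a simplification, not the full architecture: a real Transformer first applies learned projections to obtain queries, keys, and values, and runs several attention heads in parallel. The toy embeddings and the function names `softmax` and `self_attention` are assumptions made for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence of vectors.

    Returns (outputs, weights); weights[i][j] is how much position i
    attends to position j.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Compare this position's query against every key in the sequence.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # The output is a weighted average of all value vectors.
        mixed = [
            sum(w * v[dim] for w, v in zip(weights, values))
            for dim in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Toy 2-dimensional "embeddings" for a three-word sentence (made-up values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
```

Each row of `weights` sums to 1, so every output vector is a blend of all the words in the sentence. Nothing in the loop over `queries` depends on a previous iteration, which is why the computation can run in parallel across positions.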

However, Transformers were originally designed for tasks like translation and language modeling. They excel at capturing syntactic and semantic structure, but on their own they have no mechanism for choosing an action and learning from feedback about how it turned out, which dynamic, context-dependent tasks require. This limitation prompted researchers to explore new approaches, leading to Action Transformer Models.

Action Transformer Models: Understanding Context

Action Transformer Models, sometimes also called Action-Conditional Transformers or Actor-Critic Transformers, extend traditional Transformers with action and context awareness. They are designed for tasks that require tracking a changing context and generating coherent responses that fit it.

At the core of Action Transformer Models is the concept of an “action”: a decision or response that the model generates based on the given context. An action can range from selecting the most appropriate next word in a sentence to making a high-level decision in a chatbot dialogue or a reinforcement learning scenario.
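As a rough illustration of the simplest kind of action, the sketch below treats choosing the next word as picking one option from a set of scored candidates. The scores are invented for the example, and `greedy_action` and `sample_action` are hypothetical helper names, not part of any particular library or model.

```python
import math
import random


def greedy_action(scores):
    """Pick the candidate with the highest score."""
    return max(scores, key=scores.get)


def sample_action(scores, rng):
    """Sample a candidate with probability proportional to exp(score)."""
    candidates = list(scores)
    weights = [math.exp(scores[c]) for c in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]


# Hypothetical scores a model might assign to the next word after
# "The cat sat on the" (values are invented for illustration).
next_word_scores = {"mat": 2.1, "roof": 1.3, "moon": -0.5}

best = greedy_action(next_word_scores)
sampled = sample_action(next_word_scores, random.Random(0))
```

Greedy selection always returns the top-scoring word, while sampling occasionally picks a lower-scoring one. That kind of exploration matters once the model is learning from feedback on its choices.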

The Architecture of Action Transformer Models

The architecture of an Action Transformer Model typically consists of two key components: the actor and the critic. These components work in tandem to understand context and generate actions accordingly.

- The Actor: The actor generates actions. It takes the context as input, which can be a sequence of words or any other relevant information, and produces an action based on it. The actor is trained to make decisions and generate responses that are coherent and relevant to that context.

- The Critic: The critic is the evaluator. It assesses the quality of the actions the actor generates in a given context, and this feedback loop lets the model improve its decision-making over time. The critic is especially important in reinforcement learning scenarios, where the model must make sequential decisions that maximize cumulative reward.
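The actor-critic feedback loop can be sketched in a few lines. The example below is a deliberately tiny stand-in, not an Action Transformer: the actor is a table of action preferences instead of a Transformer network, the critic is a single number estimating expected reward, and the "environment" is a fixed reward per action. All names and hyperparameters here are assumptions chosen for illustration.

```python
import math
import random


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_actor_critic(rewards, steps=500, actor_lr=0.1, critic_lr=0.1, seed=0):
    """One-state actor-critic loop.

    rewards[a] is the fixed reward for action a (a toy stand-in for an
    environment). Returns the final action probabilities and value estimate.
    """
    rng = random.Random(seed)
    logits = [0.0] * len(rewards)  # actor parameters: one preference per action
    value = 0.0                    # critic parameter: expected reward estimate

    for _ in range(steps):
        probs = softmax(logits)
        # Actor: sample an action from the current policy.
        action = rng.choices(range(len(rewards)), weights=probs, k=1)[0]
        reward = rewards[action]

        # Critic: score the action relative to what it expected.
        advantage = reward - value
        value += critic_lr * advantage

        # Actor update: raise the preference for actions that beat the
        # critic's estimate, lower it for actions that fell short.
        for a in range(len(logits)):
            grad = (1.0 if a == action else 0.0) - probs[a]
            logits[a] += actor_lr * advantage * grad

    return softmax(logits), value


probs, value = train_actor_critic(rewards=[0.0, 1.0])
```

After training, `probs` favors the second action, the one that pays a reward. In a full model the same division of labor would apply, with the context fed through Transformer layers before the actor and critic produce their outputs.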

Applications of Action Transformer Models

The versatility of Action Transformer Models opens the door to a wide range of applications across various domains:

- Conversational AI: Chatbots and virtual assistants can benefit greatly from Action Transformer Models. These models can maintain context throughout a conversation, resulting in more natural and coherent interactions.

- Machine Translation: Action Transformers may improve machine translation quality by choosing each output word in light of the entire sentence context, producing translations that fit that context more accurately.

- Reinforcement Learning: In reinforcement learning tasks, such as game playing and autonomous navigation, Action Transformer Models can make sequential decisions that lead to better overall performance.

- Content Generation: Tasks such as text summarization and story generation can benefit from Action Transformers’ ability to keep context coherent throughout a document.

Challenges and Future Directions

While Action Transformer Models are a promising step forward in NLP, they come with challenges. Chief among them is the need for large amounts of data and computational resources for training. Fine-tuning these models for specific tasks can also be complex and resource-intensive.

Looking ahead, researchers are actively working on addressing these challenges and exploring ways to make Action Transformer Models more efficient and accessible. Advances in transfer learning, model compression, and domain adaptation are expected to play a significant role in overcoming these obstacles.

Conclusion

Action Transformer Models are a significant advance in natural language understanding. By giving models the ability to understand context and generate context-aware responses, they have the potential to change how we interact with machines, whether in chatbots, machine translation, reinforcement learning, or content generation. As researchers continue to refine these models and make them more accessible, we can expect further applications and improvements.
