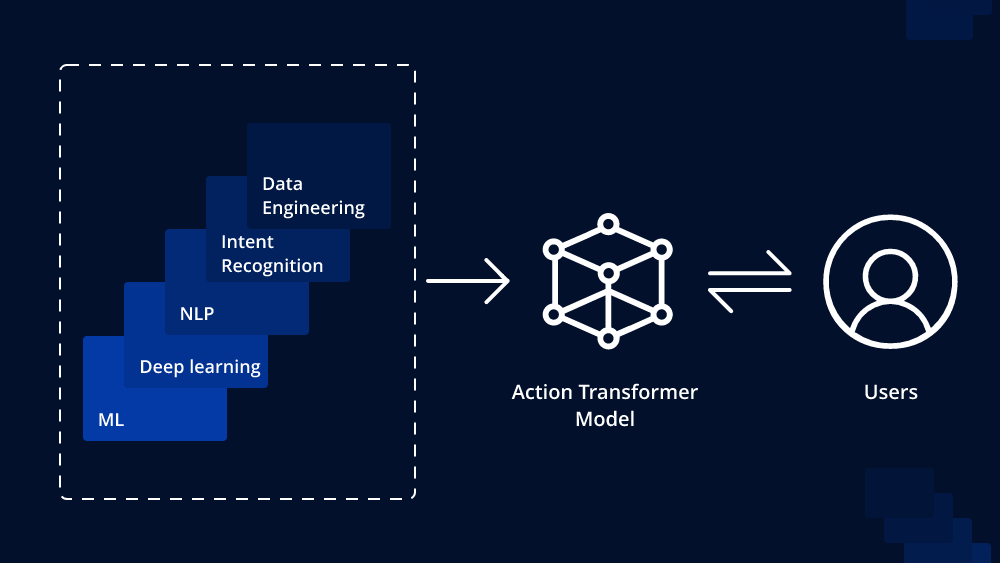

In recent years, transformer models have revolutionized the field of natural language processing (NLP) and achieved groundbreaking results in various NLP tasks. The transformer’s self-attention mechanism lets every position in a sequence attend directly to every other position, which helps it capture long-range dependencies in sequential data. One intriguing extension of the transformer architecture is the Action Transformer, which goes beyond traditional language modeling and enables the modeling of dynamic systems with actions and reactions. In this article, we will explore what an Action Transformer model is and how to implement one.

Understanding the Action Transformer Model:

The Action Transformer is an extension of the standard transformer model that incorporates actions and state changes into its framework. Instead of focusing solely on language modeling, the Action Transformer is designed to understand and predict the consequences of actions in a dynamic system. This makes it highly suitable for tasks involving sequential decision-making or real-world scenarios where actions lead to changes in the environment.

The core concept of the Action Transformer lies in encoding actions as special tokens in the input sequence. These action tokens carry information about the actions performed by agents or entities in the system. When processing the sequence, the Action Transformer’s attention mechanism learns to attend not only to the words in the input but also to these action tokens. This way, the model can learn how actions relate to their effects on the system’s state.

Implementing an Action Transformer:

To implement an Action Transformer, we will outline the necessary steps involved in modifying the standard transformer model.

1. Data Preparation:

As with any NLP task, the first step is to prepare the data. In the context of an Action Transformer, this involves formulating the input sequences with action tokens. Consider a scenario where we have agents performing actions in an environment. The input sequence could be constructed in the following format:

Agent 1: [ACTION] move left [ACTION] pick up object [ACTION] move right

Agent 2: [ACTION] move right [ACTION] move up [ACTION] push object

Here, [ACTION] denotes the action token, which informs the model about the actions taken by the respective agents.
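The format above can be sketched in a few lines of plain Python. This is a minimal illustration, not a full tokenizer: the helper names `build_sequence` and `build_vocab` are hypothetical, and splitting on whitespace is an assumption made to keep the example short.

```python
ACTION_TOKEN = "[ACTION]"

def build_sequence(actions):
    """Prefix each action phrase with the action token and split it into tokens."""
    tokens = []
    for action in actions:
        tokens.append(ACTION_TOKEN)
        tokens.extend(action.split())
    return tokens

def build_vocab(sequences):
    """Assign an integer id to every distinct token, in first-seen order."""
    vocab = {}
    for seq in sequences:
        for tok in seq:
            vocab.setdefault(tok, len(vocab))
    return vocab

agent_1 = build_sequence(["move left", "pick up object", "move right"])
agent_2 = build_sequence(["move right", "move up", "push object"])

vocab = build_vocab([agent_1, agent_2])
ids_1 = [vocab[tok] for tok in agent_1]
# ids_1 -> [0, 1, 2, 0, 3, 4, 5, 0, 1, 6]  (id 0 is the [ACTION] token)
```

The integer ids are what the model would actually consume; the action token gets its own id like any other vocabulary entry.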

2. Model Architecture:

The Action Transformer shares many architectural similarities with the standard transformer model. It consists of an encoder-decoder structure, where the encoder processes the input sequence and the decoder generates the output. However, in the Action Transformer, we also incorporate the actions into the encoder.

The encoder’s input embeddings are modified to include action embeddings, which are learned along with the word embeddings during training. This modification allows the model to differentiate between regular words and action tokens. How actions are represented matters here: the representation has to carry enough information for the model to capture the dynamics of the system.
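One possible reading of this modification is to add a small "token type" embedding on top of the usual token embedding, so that action tokens and regular words are shifted by different learned vectors. The sketch below assumes that design; the names `token_table`, `type_table`, and `embed`, the dimension of 4, and the random initialization are all illustrative choices, and in a real model both tables would be updated during training.

```python
import random

random.seed(0)
EMBED_DIM = 4  # tiny dimension, chosen only for readability

def make_table(num_rows, dim):
    """Create a randomly initialized embedding table (one row per id)."""
    return [[random.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(num_rows)]

vocab = {"[ACTION]": 0, "move": 1, "left": 2}
token_table = make_table(len(vocab), EMBED_DIM)
type_table = make_table(2, EMBED_DIM)  # row 0: regular word, row 1: action token

def embed(tokens):
    """Return token embedding + type embedding for each token."""
    vectors = []
    for tok in tokens:
        tok_vec = token_table[vocab[tok]]
        type_vec = type_table[1 if tok == "[ACTION]" else 0]
        vectors.append([a + b for a, b in zip(tok_vec, type_vec)])
    return vectors

embedded = embed(["[ACTION]", "move", "left"])  # 3 vectors of length EMBED_DIM
```

A simpler alternative is to give the action token a row in the ordinary embedding table and skip the type embedding entirely; which works better would depend on the task.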

3. Attention Mechanism:

The attention mechanism in the Action Transformer plays a vital role in understanding the causal relationships between actions and their consequences. During the self-attention process, the model learns to focus on both the word tokens and the action tokens, enabling it to reason about the cause-effect relationships in the sequence.
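To make the self-attention step concrete, here is a minimal sketch of scaled dot-product attention, softmax(QKᵀ / √d) V, in plain Python. As a simplifying assumption, the queries, keys, and values are all the input vectors themselves; a real transformer layer would first apply learned projections and use multiple heads. Nothing in the computation treats action tokens specially: they are simply positions that every other position can attend to.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (no learned projections)."""
    dim = len(x[0])
    outputs, weights = [], []
    for query in x:
        # Similarity of this position to every position, scaled by sqrt(dim).
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in x]
        row = softmax(scores)
        weights.append(row)
        # Weighted average of all value vectors.
        outputs.append([sum(w * value[j] for w, value in zip(row, x)) for j in range(dim)])
    return outputs, weights

# Three toy embedding vectors, e.g. one action token followed by two words.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(x)
```

Each row of `weights` sums to 1 and shows how much one position draws on every other position, including the action tokens.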

4. Training and Inference:

The training procedure for the Action Transformer involves feeding the model input sequences that contain action tokens, together with the corresponding output sequences. The model learns to predict the next state or action based on the provided context. Training typically involves minimizing a suitable loss function, such as cross-entropy, to optimize the model’s parameters.
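The cross-entropy loss mentioned above can be written out directly. The sketch below computes it from raw scores (logits) for a single prediction and averages it over a sequence; the function names and the example logits are made up for illustration, and a real training loop would also need gradients and an optimizer, which are omitted here.

```python
import math

def cross_entropy(logits, target_id):
    """Negative log-probability of the target id under softmax(logits)."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[target_id]

def sequence_loss(all_logits, target_ids):
    """Average cross-entropy over all positions in a sequence."""
    losses = [cross_entropy(logits, t) for logits, t in zip(all_logits, target_ids)]
    return sum(losses) / len(losses)

# With uniform logits over 4 tokens the loss is log(4): the model is guessing.
uniform = cross_entropy([0.0, 0.0, 0.0, 0.0], 2)
# Putting more weight on the correct token lowers the loss.
confident = cross_entropy([0.0, 0.0, 5.0, 0.0], 2)
```

Minimizing this quantity pushes the model to assign higher probability to the token (state or action) that actually came next in the training data.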

During inference, the trained Action Transformer can be used to generate predictions for future states or actions given a context. By providing a sequence of actions, the model can predict the system’s resulting state, facilitating various applications in sequential decision-making and dynamic system modeling.
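A simple way to generate such predictions is greedy decoding: repeatedly ask the model for scores over the next token and append the highest-scoring one. The sketch below assumes the trained model is exposed as a function from a list of token ids to a list of logits; `toy_next_logits` is a hypothetical stand-in for that model, not a real Action Transformer.

```python
def greedy_decode(next_logits, context, max_new_tokens, end_id=None):
    """Extend `context` one token at a time, always picking the top-scoring id."""
    tokens = list(context)
    for _ in range(max_new_tokens):
        logits = next_logits(tokens)
        next_id = max(range(len(logits)), key=logits.__getitem__)
        tokens.append(next_id)
        if next_id == end_id:
            break  # stop early once the end marker is produced
    return tokens

def toy_next_logits(tokens):
    """Stand-in for a trained model: favors (last id + 1) mod 4."""
    favored = (tokens[-1] + 1) % 4
    return [1.0 if i == favored else 0.0 for i in range(4)]

predicted = greedy_decode(toy_next_logits, [0], max_new_tokens=5, end_id=3)
# predicted -> [0, 1, 2, 3]
```

Greedy decoding is the simplest option; sampling or beam search could be swapped in where several plausible outcomes should be explored.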

Conclusion:

The Action Transformer model extends the capabilities of the standard transformer architecture to address tasks involving dynamic systems and sequential decision-making. By incorporating actions into the input sequence, the model gains the ability to reason about causal relationships and predict the consequences of actions.

While implementing an Action Transformer may require some adjustments to the standard transformer model, it offers promising opportunities for various real-world applications. As NLP research continues to evolve, the Action Transformer stands as an exciting development that pushes the boundaries of what transformer models can achieve.

To learn more: https://www.leewayhertz.com/action-transformer-model/