Machine learning (ML) has become a key tool for businesses seeking a competitive edge. But a successful ML project takes more than building a model; the entire ML lifecycle has to be managed efficiently. That’s where MLOps comes in. MLOps, short for Machine Learning Operations, applies DevOps principles to ML practice in order to streamline the lifecycle and improve collaboration among data scientists, engineers, and operations teams. This article walks through 15 practices for implementing MLOps effectively.

1. Understanding the ML Lifecycle:

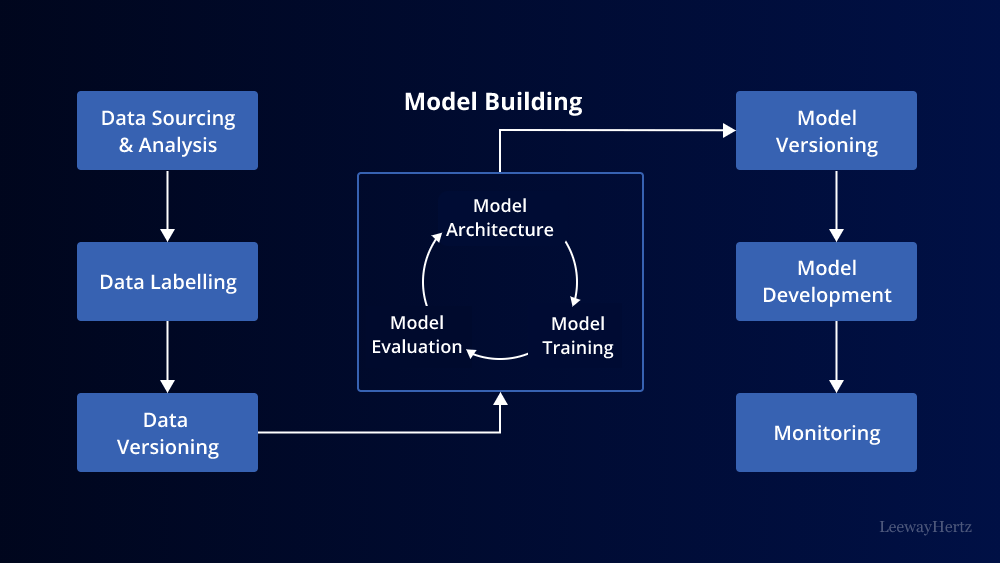

Before diving into MLOps, it helps to understand the stages of the ML lifecycle: data collection, data preprocessing, model training, evaluation, deployment, and monitoring. Each stage has its own specialized tools and workflows.

2. Collaboration and Communication:

Promote a culture of collaboration and open communication among data scientists, engineers, and operations teams. Regular meetings and shared documentation keep everyone aligned on the project’s objectives and let the team address potential challenges together.

3. Version Control:

Implement version control for ML artifacts such as code, datasets, and models. Git is a popular choice for version control, allowing teams to track changes, collaborate efficiently, and revert to previous states if necessary.
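Git handles code well, but large datasets and model files are often tracked indirectly, for example by committing a small manifest of content hashes instead of the files themselves. The sketch below illustrates that idea with the Python standard library; the function names (`hash_file`, `build_manifest`, `write_manifest`) are hypothetical, and dedicated tools offer far more than this.

```python
import hashlib
import json
from pathlib import Path


def hash_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(paths: list[Path]) -> dict[str, str]:
    """Map each artifact path to the hash of its current contents."""
    return {str(p): hash_file(p) for p in sorted(paths)}


def write_manifest(paths: list[Path], manifest_path: Path) -> None:
    """Write the manifest as stable JSON that produces clean Git diffs."""
    manifest = build_manifest(paths)
    manifest_path.write_text(json.dumps(manifest, indent=2, sort_keys=True) + "\n")
```

Committing the manifest alongside the training code ties each commit to the exact dataset and model bytes it was run with: if a file changes, its hash changes, and the diff shows it.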

4. Reproducibility:

Reproducibility is vital for ML projects. Record every dependency and configuration used in the model training process, including library versions, hyperparameters, and random seeds. Re-running the training should then produce the same results, and any discrepancy becomes easier to trace.
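As a rough illustration of what recording dependencies and configurations can look like, the sketch below fixes a random seed and captures the configuration, Python version, platform, and package versions in one JSON-serializable record. The function name `capture_run_record` and the record layout are assumptions made for this example, not a standard format.

```python
import json
import platform
import random
import sys
from importlib import metadata


def capture_run_record(config: dict, seed: int, packages: list[str]) -> dict:
    """Seed the RNG and describe the environment of a training run."""
    # Only Python's built-in RNG is seeded here; numerical and ML libraries
    # keep their own random state and need to be seeded separately.
    random.seed(seed)

    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # package is not installed in this environment

    return {
        "seed": seed,
        "config": config,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


if __name__ == "__main__":
    record = capture_run_record({"learning_rate": 0.01, "epochs": 5}, seed=42, packages=["pip"])
    print(json.dumps(record, indent=2))
```

Storing this record next to the trained model makes it possible to rebuild the same environment later and to compare two runs field by field.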

5. Continuous Integration and Continuous Deployment (CI/CD):

Apply CI/CD practices to ML pipelines. Automate the building, testing, and deployment of ML models, reducing the time from development to production.
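One common automated test in an ML pipeline is a quality gate: the pipeline refuses to deploy a candidate model that scores below a minimum or noticeably worse than the current one. The sketch below shows the idea; the thresholds (`min_accuracy`, `max_regression`) and function names are illustrative assumptions, and a real project would choose metrics that fit its problem.

```python
def accuracy(predictions: list, labels: list) -> float:
    """Fraction of predictions that match the labels."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and the same length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def passes_quality_gate(
    candidate_accuracy: float,
    baseline_accuracy: float,
    min_accuracy: float = 0.90,
    max_regression: float = 0.01,
) -> bool:
    """Accept the candidate only if it clears an absolute floor and
    does not fall more than max_regression below the current model."""
    meets_floor = candidate_accuracy >= min_accuracy
    no_regression = candidate_accuracy >= baseline_accuracy - max_regression
    return meets_floor and no_regression


def run_gate(predictions: list, labels: list, baseline_accuracy: float) -> int:
    """Return a process exit code: 0 lets the pipeline continue, 1 fails the job."""
    candidate = accuracy(predictions, labels)
    ok = passes_quality_gate(candidate, baseline_accuracy)
    print(f"candidate={candidate:.3f} baseline={baseline_accuracy:.3f} pass={ok}")
    return 0 if ok else 1
```

A CI job could pass the result of `run_gate(...)` to `sys.exit`, so that a failing gate stops the deployment step automatically.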

6. Containerization:

Containerize ML applications using platforms like Docker. Containers encapsulate the model and its dependencies, ensuring consistency across different environments and easing deployment.
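What goes inside the container is usually a small service that loads the model and answers prediction requests. The sketch below shows such an entry point using only the Python standard library. The linear "model" (`WEIGHTS`, `BIAS`), the `/predict` and `/healthz` paths, and the `PORT` environment variable are all assumptions for illustration. Reading settings from environment variables lets the same image run unchanged in every environment.

```python
import json
import os
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical stand-in for a real model loaded from disk at startup.
WEIGHTS = [0.5, -0.25]
BIAS = 0.1


def predict(features: list[float]) -> float:
    """Score one example with the toy linear model."""
    if len(features) != len(WEIGHTS):
        raise ValueError(f"expected {len(WEIGHTS)} features, got {len(features)}")
    return BIAS + sum(w * x for w, x in zip(WEIGHTS, features))


def route(method: str, path: str, body: bytes) -> tuple[int, dict]:
    """Map a request to (status code, JSON payload), independent of the HTTP layer."""
    if method == "GET" and path == "/healthz":
        return 200, {"status": "ok"}
    if method == "POST" and path == "/predict":
        try:
            features = json.loads(body)["features"]
            return 200, {"prediction": predict(features)}
        except (ValueError, KeyError, TypeError) as exc:
            return 400, {"error": str(exc)}
    return 404, {"error": "not found"}


class PredictionHandler(BaseHTTPRequestHandler):
    def _respond(self, method: str) -> None:
        length = int(self.headers.get("Content-Length") or 0)
        body = self.rfile.read(length) if length else b""
        status, payload = route(method, self.path, body)
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def do_GET(self) -> None:
        self._respond("GET")

    def do_POST(self) -> None:
        self._respond("POST")


def make_server() -> HTTPServer:
    """Build the server; the port comes from the environment, defaulting to 8080."""
    port = int(os.environ.get("PORT", "8080"))
    return HTTPServer(("0.0.0.0", port), PredictionHandler)
```

The container's start command would call `make_server().serve_forever()`. Keeping `route` separate from the HTTP handler means the request logic can be tested without starting a server.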

7. Orchestration:

Use an orchestration tool such as Kubernetes to manage containerized ML applications. Kubernetes handles scaling, load balancing, and automatic restarts of failed containers, which supports high availability and performance.
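Kubernetes is configured through declarative manifests, usually written in YAML but also accepted as JSON. To give a feel for what such a manifest contains, the sketch below builds a minimal Deployment as a Python dictionary. The application name, image reference, and the `/healthz` readiness path are hypothetical placeholders, and a production manifest would typically add resource limits and more.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int = 3, port: int = 8080) -> dict:
    """Build a minimal Kubernetes Deployment for a model-serving container."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Number of identical pods to keep running; failed pods are replaced.
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                            # Traffic is routed to a pod only after this check passes.
                            # Assumes the service exposes a /healthz endpoint.
                            "readinessProbe": {
                                "httpGet": {"path": "/healthz", "port": port},
                                "initialDelaySeconds": 5,
                                "periodSeconds": 10,
                            },
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = deployment_manifest("churn-model", "registry.example.com/churn-model:1.0.0")
    print(json.dumps(manifest, indent=2))
```

Saving the printed JSON to a file and applying it with `kubectl apply -f` asks the cluster to keep three replicas of the container running.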

8. Monitoring and Logging:

Monitor the performance of deployed models and log relevant information for debugging and auditing. Tools such as Prometheus (metrics collection) and Grafana (dashboards) help track metrics and visualize performance.
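To make this concrete, the sketch below wraps a prediction function so that every call is counted, timed, and logged, and then renders the counters in a simplified subset of the text format that Prometheus scrapes. The class name, metric names, and labels are assumptions for this example. A real service would normally use an official Prometheus client library rather than rendering the text by hand.

```python
import logging
import time
from collections import Counter

logger = logging.getLogger("model_monitor")


class ModelMonitor:
    """Counts prediction outcomes and accumulates time spent predicting."""

    def __init__(self, model_version: str) -> None:
        self.model_version = model_version
        self.counts = Counter()
        self.seconds_total = 0.0

    def observe(self, predict_fn, features):
        """Call predict_fn(features), recording outcome and latency."""
        start = time.perf_counter()
        try:
            result = predict_fn(features)
        except Exception:
            self.counts["error"] += 1
            logger.exception("prediction failed (model_version=%s)", self.model_version)
            raise
        finally:
            # Runs for both successful and failed calls.
            self.seconds_total += time.perf_counter() - start
        self.counts["ok"] += 1
        logger.info("prediction ok (model_version=%s)", self.model_version)
        return result

    def render(self) -> str:
        """Render the metrics as plain text, one sample per line."""
        version = self.model_version
        lines = ["# TYPE model_predictions_total counter"]
        for outcome in ("ok", "error"):
            lines.append(
                f'model_predictions_total{{model_version="{version}",outcome="{outcome}"}} '
                f"{self.counts[outcome]}"
            )
        lines.append("# TYPE model_prediction_seconds_total counter")
        lines.append(
            f'model_prediction_seconds_total{{model_version="{version}"}} {self.seconds_total}'
        )
        return "\n".join(lines) + "\n"
```

Dividing the total seconds by the total number of predictions gives an average latency, and the ratio of error to ok counts gives an error rate, both of which are typical dashboard panels.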

9. Feedback Loop and Retraining:

Set up a feedback loop that collects data on how the model performs in production. This data helps identify and fix issues and, once labeled, can serve as new training data to improve the model’s accuracy over time.
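A simple version of such a loop can be sketched as follows: each production prediction is stored together with its true outcome once that becomes known, accuracy is tracked over a rolling window, and a drop below a threshold signals that retraining is due. The class name and the default window, threshold, and minimum sample count are illustrative assumptions.

```python
from collections import deque


class FeedbackLoop:
    """Tracks rolling production accuracy and buffers labeled examples."""

    def __init__(self, window: int = 500, min_accuracy: float = 0.85, min_samples: int = 100) -> None:
        self.outcomes = deque(maxlen=window)  # True/False per recent prediction
        self.retraining_buffer = []           # (features, actual) pairs for the next training run
        self.min_accuracy = min_accuracy
        self.min_samples = min_samples

    def record(self, features, prediction, actual) -> None:
        """Store one production prediction together with its true outcome."""
        self.outcomes.append(prediction == actual)
        self.retraining_buffer.append((features, actual))

    def rolling_accuracy(self):
        """Accuracy over the most recent window, or None if nothing was recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_retrain(self) -> bool:
        """Signal retraining once enough samples exist and accuracy has dropped."""
        if len(self.outcomes) < self.min_samples:
            return False
        return self.rolling_accuracy() < self.min_accuracy
```

The minimum sample count keeps a handful of early mistakes from triggering a retraining run, while the bounded window makes the signal react to recent behavior rather than the model's whole history.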

10. Security and Privacy:

Ensure that data used for training and in production is handled securely. Implement proper access controls, encryption, and anonymization techniques to protect sensitive information.
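One small building block here is replacing direct identifiers with keyed hashes before data reaches the training pipeline. The sketch below does this with HMAC-SHA256 from the Python standard library; the field names and helper functions are hypothetical. Note that this is pseudonymization rather than full anonymization: anyone holding the key can recompute the mapping, so the key itself needs to be stored and access-controlled like any other secret.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 hash (stable for a given key)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_record(record: dict, secret_key: bytes, id_fields: set, drop_fields: set) -> dict:
    """Return a copy of the record that is safer to use for training.

    Fields in drop_fields are removed entirely; fields in id_fields are
    pseudonymized so records can still be joined on them.
    """
    cleaned = {}
    for field, value in record.items():
        if field in drop_fields:
            continue
        if field in id_fields:
            cleaned[field] = pseudonymize(str(value), secret_key)
        else:
            cleaned[field] = value
    return cleaned


if __name__ == "__main__":
    # Hypothetical record; in practice the key would come from a secret store.
    raw = {"user_id": "u-1001", "email": "jane@example.com", "age": 34}
    print(scrub_record(raw, b"demo-key-only", id_fields={"user_id"}, drop_fields={"email"}))
```

Because the hash is deterministic for a given key, the same user maps to the same token across datasets, which keeps joins and per-user features working without exposing the original identifier.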

11. Model Governance:

Establish a model governance process to manage the lifecycle of models effectively. This includes versioning, deprecation, and documentation to ensure models are consistently updated and retired when necessary.
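The versioning, deprecation, and documentation steps can be pictured as a small registry in which every model version carries a description, a lifecycle stage, and an audit trail of stage changes. The in-memory sketch below illustrates the idea; the stage names and the allowed transitions are assumptions for this example, and a real team would typically rely on a persistent model registry.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Which stage changes are permitted; deprecated versions cannot be revived.
ALLOWED_TRANSITIONS = {
    "staging": {"production", "deprecated"},
    "production": {"deprecated"},
    "deprecated": set(),
}


@dataclass
class ModelVersion:
    name: str
    version: int
    description: str
    stage: str = "staging"
    history: list = field(default_factory=list)  # (UTC timestamp, stage) audit trail

    def set_stage(self, stage: str) -> None:
        self.stage = stage
        self.history.append((datetime.now(timezone.utc).isoformat(), stage))


class ModelRegistry:
    def __init__(self) -> None:
        self._versions: dict[tuple[str, int], ModelVersion] = {}

    def register(self, name: str, description: str) -> ModelVersion:
        """Add a new version of a model, numbered after the latest existing one."""
        latest = max((v for (n, v) in self._versions if n == name), default=0)
        model = ModelVersion(name, latest + 1, description)
        model.set_stage("staging")
        self._versions[(name, model.version)] = model
        return model

    def transition(self, name: str, version: int, new_stage: str) -> None:
        """Move a version to a new stage, enforcing the allowed transitions."""
        model = self._versions[(name, version)]
        if new_stage not in ALLOWED_TRANSITIONS[model.stage]:
            raise ValueError(f"cannot move {name} v{version} from {model.stage} to {new_stage}")
        if new_stage == "production":
            # Only one production version per model: retire the current one.
            for other in self._versions.values():
                if other.name == name and other.stage == "production":
                    other.set_stage("deprecated")
        model.set_stage(new_stage)
```

Promoting a new version automatically deprecates the previous production version, so there is always a single, documented answer to the question of which model is live.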

12. A/B Testing:

Use A/B testing to compare different versions of a model on live traffic and determine which performs best. This supports data-driven decisions about model improvements.
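Two pieces usually make up an A/B test of models: a stable way to assign each user to a variant, and a statistical check on the results. The sketch below uses hash-based assignment and a two-sided two-proportion z-test, which is one common choice among several. The function names and the example numbers are illustrative, and the test assumes reasonably large, independent samples.

```python
import hashlib
import math


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically assign a user to model "A" (control) or "B" (treatment)."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # value in [0, 1)
    return "B" if bucket < treatment_share else "A"


def two_proportion_z_test(successes_a: int, n_a: int, successes_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in success rates B - A."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_b - p_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p_value


if __name__ == "__main__":
    # Hypothetical results: conversions out of users served by each model.
    z, p = two_proportion_z_test(successes_a=100, n_a=1000, successes_b=150, n_b=1000)
    print(f"z={z:.2f}, p={p:.4f}")
```

Hashing the experiment name together with the user ID keeps each user on the same variant for the whole experiment while giving different experiments independent splits.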

13. Collaboration Tools:

Use collaboration tools like Slack, Microsoft Teams, or project management platforms to facilitate communication among team members, allowing for quick updates and issue resolution.

14. Continuous Learning:

Stay updated with the latest developments in ML and MLOps. Attend conferences, workshops, and webinars to learn from experts and understand emerging best practices.

15. Documentation:

Create comprehensive documentation for each stage of the ML lifecycle. This ensures that knowledge is shared across the team and can be referred to in the future for similar projects.

In conclusion, implementing MLOps is essential for ML projects that need to run reliably in production. It streamlines the ML lifecycle, improves collaboration among teams, and supports the reliability and scalability of ML applications. By following the practices outlined in this guide, organizations can strengthen their ML capabilities and make better data-driven decisions.

To learn more: https://www.leewayhertz.com/mlops-pipeline/
