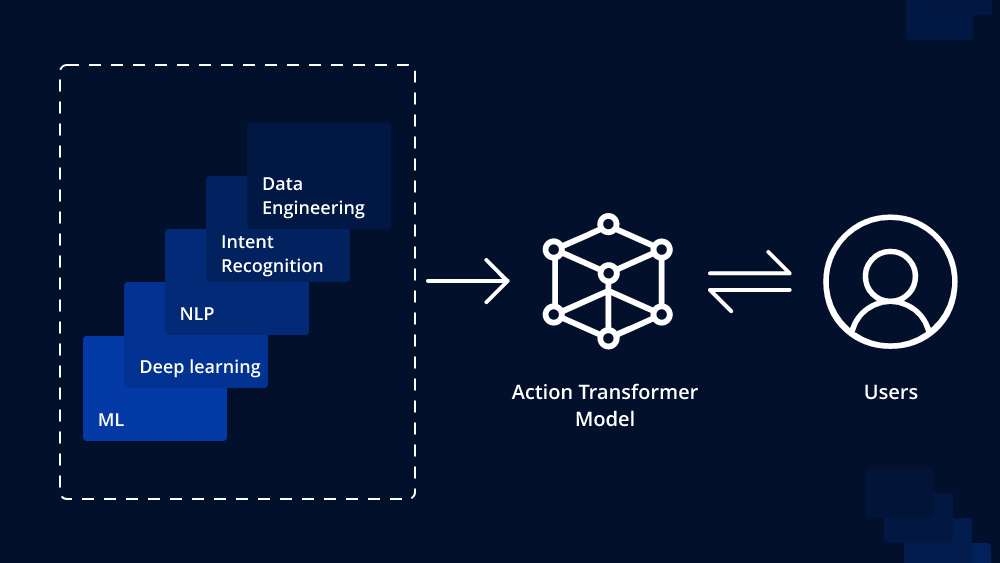

In recent years, transformer-based models have revolutionized the field of natural language processing (NLP), achieving state-of-the-art results in tasks such as language translation, sentiment analysis, and text generation. The Action Transformer Model extends the traditional transformer architecture beyond standard language modeling by incorporating dynamic actions, which let the model actively interact with and modify its environment. This article covers the fundamentals of the Action Transformer Model and explains how it operates.

Understanding the Transformer Architecture

Before turning to the Action Transformer Model, let’s briefly recap the traditional transformer architecture. Transformers are built on self-attention, a mechanism by which the model learns how much weight to give every other word in a sentence while processing each word. The original transformer architecture is an encoder-decoder framework with multiple attention layers, which allows it to capture long-range dependencies in text effectively.
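To make the idea of weighing words concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. The toy vectors and the function names (`softmax`, `self_attention`) are illustrative assumptions; a real transformer derives queries, keys, and values from learned projections and runs many attention heads in parallel.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this token's query to every token's key.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is the attention-weighted average of the value vectors.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Toy embeddings for three tokens (made-up numbers, reused as Q, K, and V).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
```

Each row of `weights` shows how strongly one token attends to every token in the sequence, including itself.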

The Evolution: Action Transformer Model

The Action Transformer Model extends the transformer architecture with dynamic actions and interactions, making it suitable for a broader range of tasks. Traditional transformers treat their input as a fixed sequence that they read but never alter, which can be limiting for tasks that require interacting with or manipulating the input data. Action Transformers aim to overcome this limitation by actively modifying their input during processing.

Actions and Entities

In the Action Transformer Model, actions are programmable operations that the model can perform on the input data. These actions can include operations like insertion, deletion, and substitution of tokens in the input sequence. Additionally, the model can use learned functions to create new information or query external knowledge sources, enabling it to dynamically acquire relevant information during processing.

Entities refer to the elements in the input sequence that the model can interact with. For instance, in a sentence, words or phrases can be entities that the model performs actions on. By manipulating these entities, the Action Transformer gains more control and adaptability over the input data.
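As a rough illustration of actions applied to entities, the sketch below treats each token as an entity and models insertion, deletion, and substitution as small data objects. The `Action` class and `apply_action` function are hypothetical names chosen for this example, not part of any published Action Transformer implementation.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass(frozen=True)
class Action:
    kind: str                    # "insert", "delete", or "substitute"
    position: int                # index of the entity (token) to act on
    token: Optional[str] = None  # new token, needed for insert/substitute

def apply_action(tokens: List[str], action: Action) -> List[str]:
    """Return a new token list with the action applied; the input is not mutated."""
    result = list(tokens)
    if action.kind in ("insert", "substitute") and action.token is None:
        raise ValueError(f"{action.kind} needs a token")
    if action.kind == "insert":
        result.insert(action.position, action.token)
    elif action.kind == "delete":
        del result[action.position]
    elif action.kind == "substitute":
        result[action.position] = action.token
    else:
        raise ValueError(f"unknown action kind: {action.kind}")
    return result

sentence = ["the", "cat", "sat"]
edited = apply_action(sentence, Action("substitute", 1, "dog"))
edited = apply_action(edited, Action("insert", 3, "down"))
edited = apply_action(edited, Action("delete", 0))
print(edited)  # ['dog', 'sat', 'down']
```

Returning a new list instead of editing in place keeps every intermediate state available, which is convenient when a sequence of actions has to be inspected or scored later.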

The Action Module

The Action Transformer introduces an action module, which is a crucial component responsible for executing actions on entities. The action module takes the current state of the model, the selected entities, and the specified action as input and applies the action accordingly. This process is iterative, allowing the model to perform multiple actions in sequence.
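The iterative loop described above might look roughly like the following sketch. Everything here is an assumption made for illustration: actions are plain tuples, `run_action_module` is a hypothetical name, and the hand-written `drop_fillers` rule stands in for whatever learned policy would pick the next action in a real model.

```python
from typing import Callable, List, Optional, Tuple

# An action is (kind, position, token); token is ignored for "delete".
Action = Tuple[str, int, Optional[str]]

def execute(tokens: List[str], action: Action) -> List[str]:
    """Apply one action and return the new state."""
    kind, position, token = action
    result = list(tokens)
    if kind == "insert":
        result.insert(position, token)
    elif kind == "delete":
        del result[position]
    elif kind == "substitute":
        result[position] = token
    else:
        raise ValueError(f"unknown action kind: {kind}")
    return result

def run_action_module(
    tokens: List[str],
    choose_action: Callable[[List[str]], Optional[Action]],
    max_steps: int = 10,
) -> Tuple[List[str], List[Action]]:
    """Pick and apply actions until the chooser returns None or steps run out."""
    state = list(tokens)
    history: List[Action] = []
    for _ in range(max_steps):
        action = choose_action(state)
        if action is None:
            break
        state = execute(state, action)
        history.append(action)
    return state, history

FILLERS = {"really", "very"}

def drop_fillers(state: List[str]) -> Optional[Action]:
    """Stand-in for a learned policy: delete one filler word per step."""
    for index, token in enumerate(state):
        if token in FILLERS:
            return ("delete", index, None)
    return None

final_state, history = run_action_module(
    ["a", "really", "very", "good", "film"], drop_fillers
)
print(final_state)  # ['a', 'good', 'film']
```

The number of steps taken depends on the input: a sentence with no filler words ends the loop immediately, while this one takes two deletions.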

Dynamic Computation Graph

One of the key features of the Action Transformer is the dynamic computation graph. Unlike traditional transformers, whose computation graphs are fixed, Action Transformers construct their computation graphs dynamically from the sequence of actions they perform. This allows the model to adapt its architecture on the fly, tailoring it to the specific task and input at hand.

Action Rewards and Reinforcement Learning

To train Action Transformer Models, reinforcement learning techniques are often employed. The model receives action rewards that reflect the quality of the actions taken during processing. These rewards guide the model to learn which actions are beneficial or detrimental to the task at hand. By using reinforcement learning, the model learns to optimize its actions to achieve better results.
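The toy example below sketches how rewards can steer a policy toward useful actions. It is a deliberate simplification: the reward values are invented, the policy is a single softmax over three action types, and it follows the exact expected policy gradient rather than sampling episodes as practical reinforcement learning methods do, so the outcome is deterministic.

```python
import math

ACTIONS = ["insert", "delete", "substitute"]
# Hypothetical average reward for each action type on some task.
REWARDS = {"insert": 0.0, "delete": 1.0, "substitute": 0.2}

def softmax(logits):
    """Convert logits into action probabilities."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train(steps=500, learning_rate=0.5):
    """Gradient ascent on expected reward for a softmax policy."""
    logits = [0.0] * len(ACTIONS)
    for _ in range(steps):
        probs = softmax(logits)
        expected = sum(p * REWARDS[a] for p, a in zip(probs, ACTIONS))
        # d(expected reward) / d(logit_a) = p_a * (r_a - expected reward)
        for i, action in enumerate(ACTIONS):
            logits[i] += learning_rate * probs[i] * (REWARDS[action] - expected)
    return softmax(logits)

policy = dict(zip(ACTIONS, train()))
best_action = max(policy, key=policy.get)
print(best_action)  # delete
```

Actions whose reward is above the current expected reward gain probability and the rest lose it, which is the same pressure a sampled policy-gradient method applies on average.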

Applications of Action Transformers

Action Transformers are a promising fit for a range of NLP tasks. In language translation, for instance, the model can dynamically modify the source sentence before generating the translation, which may lead to more accurate and fluent output. In text summarization, the model can selectively delete or emphasize parts of the input text to produce more concise summaries.

Conclusion

The Action Transformer Model is an innovative extension of the traditional transformer architecture that introduces dynamic actions and interactions with input data. By incorporating programmable actions and entities, the model gains a higher degree of control and adaptability over the processing of textual information. As research in this area continues to evolve, we can expect Action Transformers to play a crucial role in shaping the future of natural language processing and its applications across various domains.

To learn more: https://www.leewayhertz.com/action-transformer-model/
